I got talked into going to SC again this year, as I have almost every year since 2009.

It’s not really my area of focus, but it’s always interesting.

UK’s presence featured a mixture of IT/CCS types and researchers. The research end of the booth was mostly focused on ongoing Parallel Bit Pattern Computing work, featuring a new demo/visualization thing that I built much of the hardware and some of the software for in the 3 weeks before we left for the conference (the rest came from Hank modelling some printable parts, pulling the compute engine from an older demo he wrote, and building some adapter code). It was more exhausting than the conference itself, but a really fun prototyping/micromanufacturing flex: fancy 3D printed parts, 2020 extrusion, some laser cut bits, piles of addressable LEDs, a bit of embedded electronics. There is also a partially-functional prototype backplane, linking 4 EBAZ4205 FPGA boards through an Aggregate Function Network as a PBP substrate, that …I designed. There’s a lot of not-my-job work I did on display; I should probably start throwing more of a fit about that.

Some notes about the stuff we were showing and interesting(?) industry observations below.

The punchline on the whole PBP thing is that it’s a different way of accomplishing the only thing programmers are really excited about quantum for: the possibility of covering n of the problem space of a Hard problem in log(n) operations. In a quantum machine, that is (theoretically) accomplished by getting 2^n states out of n entangled qubits, but you make a horrible trade-off: a quantum machine’s state vector can only be sampled, not fully read out, it has no stable storage, and the cycle time is necessarily slow to remove errant energy. PBP promises a similar benefit, but gets there by bit-level optimization of the code: it computes over symbolic chunks of n bits (8 in the demo, 1024 in the fanciest prototype underway) and does RLE/regex-type manipulations to eliminate redundancies. It does exact-width analysis on arithmetic, so it can avoid activating unnecessary gates. Imagine you write code like `for(int i = 0; i<100;i++)`: it’s not hard to statically determine that i requires a 7-bit incrementer, not a machine-word-wide fast adder, and on a bit-serial backend you can simply not run the carry chain for more than 7 cycles. PBP systems also cache recent operations, so redundant block-sized operations get factored and reused. It’s pretty mind-bending, but we’re a good 6 years into thinking about it at UK and it keeps working out.
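The exact-width argument is easy to check with a little arithmetic. This Python sketch is an illustration under stated assumptions, not PBP’s compiler: `counter_width`, `bit_serial_increment`, and `run_loop` are hypothetical names, the counter is stored LSB-first as a list of bits, and the incrementer spends one cycle per bit it visits. It also assumes an extra trick the text above doesn’t claim, stopping as soon as the carry dies, so the 7-cycle worst case only happens on the 63 to 64 step.

```python
def counter_width(bound):
    """Bits a counter needs for `for (i = 0; i < bound; i++)`, bound >= 1.
    i takes the values 0..bound (it holds `bound` at the final, failing test)."""
    return bound.bit_length()


def bit_serial_increment(bits):
    """Add 1 to an LSB-first list of bits, one bit per cycle (hypothetical model).
    Returns the cycles used: the carry chain stops as soon as the carry dies."""
    carry, cycles = 1, 0
    for k in range(len(bits)):
        cycles += 1
        total = bits[k] + carry
        bits[k] = total & 1
        carry = total >> 1
        if not carry:
            break
    return cycles


def run_loop(bound):
    """Run the loop's increments on an exact-width counter and tally cycles."""
    width = counter_width(bound)
    i_bits = [0] * width
    cycles = [bit_serial_increment(i_bits) for _ in range(bound)]
    value = sum(b << k for k, b in enumerate(i_bits))
    return width, value, sum(cycles), max(cycles)


print(run_loop(100))  # (7, 100, 197, 7)
```

Over the 100 increments, that 7-bit counter spends 197 bit-cycles in total and never more than 7 on a single step; a bit-serial unit that always stepped through all 32 bits of an `int` would spend 3,200 on the same loop.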

This makes a PBP processor both an extremely efficient bit-serial SIMD machine (think the progeny of a mid-80s bespoke high-end architecture like a Thinking Machines CM-2, Cray-2, or MasPar, as well as a bunch of more recent but less prominent in-memory compute designs) and a perfect substrate for running quantum-inspired algorithms. The state vectors turn out not to be very entropic (specifically, it’s likely exponentially hard to add entropy to them, so they MUST not be), so a PBP machine can maintain them with very little actual computation. IMO we’ve had more examples of quantum-inspired algorithms doing well than actual quantum wins in the broader literature, so it seems like a worthy thing to lean into.
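For anyone who hasn’t met bit-serial SIMD, here is a minimal Python sketch of the classic bit-plane layout used by machines in the CM-2 mold: each lane’s integer is spread across bit planes, and addition walks the planes one full-adder step at a time, updating every lane per step. A Python int stands in for a wide register, and `to_planes`, `from_planes`, and `bit_serial_add` are hypothetical helpers, not PBP code. The point is only that the cost scales with operand width (7 planes here, in the exact-width spirit) rather than with the number of lanes.

```python
def to_planes(values, width):
    """Transpose per-lane integers into bit planes: bit `lane` of planes[b]
    is bit b of values[lane]. A Python int stands in for a wide register."""
    planes = []
    for b in range(width):
        plane = 0
        for lane, v in enumerate(values):
            plane |= ((v >> b) & 1) << lane
        planes.append(plane)
    return planes


def from_planes(planes, lanes):
    """Inverse of to_planes: read each lane's value back out of the planes."""
    return [sum(((p >> lane) & 1) << b for b, p in enumerate(planes))
            for lane in range(lanes)]


def bit_serial_add(a_planes, b_planes):
    """Ripple-carry add, one full-adder step per bit plane. Each step is a few
    word-wide logic ops that update every lane at once, so the step count is
    the operand width, regardless of how many lanes there are."""
    carry = 0
    out = []
    for a, b in zip(a_planes, b_planes):
        out.append(a ^ b ^ carry)
        carry = (a & b) | (carry & (a ^ b))
    out.append(carry)  # the final carry-out becomes the extra top plane
    return out


a = [3, 5, 100, 100]
b = [4, 7, 27, 100]
width = 7  # exact width: every operand here fits in 7 bits
total = bit_serial_add(to_planes(a, width), to_planes(b, width))
print(from_planes(total, len(a)))  # [7, 12, 127, 200]
```

Adding 4 lanes or 4,000 takes the same 7 full-adder steps here; only the register width changes.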

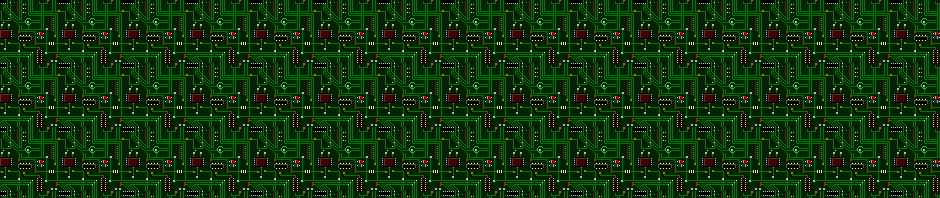

The KES demo is showing the state-vectors of 6 pbits (like a qubit, but not) being operated on, with the idle stored bits in blue, the constant-for-a-whole-pattern blocks in white, the blocks that can be handled via symbolic analysis in green, blocks pulled from the cache in yellow, and bits that actually have to be touched to maintain the state-space in red. It makes it extremely visible that there are not very many red bits.
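To make that color legend a little more concrete, here is a toy Python sketch. It is not the demo’s engine; it assumes a pbit over n = 6 pbits is stored as a 64-bit pattern with one bit per entanglement channel, split into 8-bit chunks, and it borrows the demo’s color names for hypothetical rules of its own: white when both input chunks are constant, green for the `x & 0` / `x & all-ones` shortcuts, yellow for a cache hit, red when the bits actually get touched. `pbit_chunks` and `and_classified` are made-up names for illustration.

```python
from collections import Counter

CHUNK_BITS = 8                      # chunk size used in the demo
FULL = (1 << CHUNK_BITS) - 1        # 0xFF: an all-ones chunk


def pbit_chunks(k, n):
    """Hypothetical layout: pbit k of n as a 2**n-bit pattern where bit e is
    bit k of the channel index e, split into 8-bit chunks (needs n >= 3)."""
    chunks = []
    for base in range(0, 1 << n, CHUNK_BITS):
        word = 0
        for j in range(CHUNK_BITS):
            word |= (((base + j) >> k) & 1) << j
        chunks.append(word)
    return chunks


def and_classified(a, b, cache):
    """AND two chunked patterns, tagging each chunk with how it was produced.
    Color names borrow the demo's legend; the rules themselves are assumed."""
    out, colors = [], []
    for x, y in zip(a, b):
        if x in (0, FULL) and y in (0, FULL):
            r, color = x & y, "white"                    # constant in, constant out
        elif x == 0 or y == 0:
            r, color = 0, "green"                        # symbolic: x & 0 == 0
        elif x == FULL or y == FULL:
            r, color = (y if x == FULL else x), "green"  # symbolic: x & 1s == x
        elif (x, y) in cache:
            r, color = cache[(x, y)], "yellow"           # reuse an earlier result
        else:
            r = x & y                                    # actually touch the bits
            cache[(x, y)] = r
            color = "red"
        out.append(r)
        colors.append(color)
    return out, colors


n = 6
pbits = [pbit_chunks(k, n) for k in range(n)]
distinct = {c for p in pbits for c in p}
print(len(distinct), "distinct chunks out of", n * len(pbits[0]))  # 5 distinct chunks out of 48

cache = {}
for i, j in [(0, 1), (0, 3), (3, 5)]:
    _, colors = and_classified(pbits[i], pbits[j], cache)
    print(f"pbit{i} & pbit{j}:", Counter(colors))
```

Under these toy rules, the six pbits’ 48 chunks hold only 5 distinct values, and ANDing pbits 0 and 1 touches real bits once, pulling the other 7 chunks from the cache.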

Conference wide, there were some interesting absences, affiliation changes, etc. No citations, all shit talking:

Nvidia/Mellanox didn’t have a huge presence of their own; they just intruded into almost everything else, and during the first day conspicuously announced the successor to a high-end product whose current version they’re still having trouble delivering.

IBM kept both their Quantum stuff and Red Hat almost invisible, in contrast to those being the big things the last few years. The only big piece of hardware in their booth was a …tape library unit… SUSE, Alma, Oracle, and CIQ/Rocky were all very visible. The HPC community was one of the most pissed-off groups when RH/IBM pulled the whole “get control of CentOS, lull Scientific Linux into naming CentOS as its successor, then rug-pull the utility of CentOS as a standard base (twice)” thing. That presence probably means that everyone is now comfortable with the idea that we need a new standard base enterprise Linux with a more open governance structure, and it is likely to be OpenELA.

Many of the sysadmin types tell me that ROCm (AMD’s GPU-type device stack) is now actually capable of running most useful workloads, so apparently Nvidia buying PGI, under circumstances that make it hard to imagine they were doing anything other than trying to stop the release of a portable CUDA toolchain, bought them about a decade of moat. It may just be that the higher-level languages (torch, orca) got popular and all have AMD backends because of the really big HPC facilities’ investments, rather than any real same-layer compatibility.

D-Wave was nowhere to be seen, but a bunch of new quantum startups with more method variety have cropped up, I think in response to the settling (annealing)-based stuff not turning out to be very useful and to some vaguely promising results on photonic mechanisms out of China. The company that builds the chandelier-looking chillers for many of the big quantum players had their own little booth this year, which I suppose in the standard analogy (and reality) is the sheet-metal stamper peeking out from behind the shovel salesman.

It was a rehash of an earlier conference paper, but the open secret that the proxy benchmarks for the big codes the national labs run are now something like 75% memory fetches and integer math seems to be fully out. They spend all their time pointer chasing in the pivot tables for sparse matrices, and the fancy FP hardware barely matters because, as always in a modern context, everything is dominated by hard-to-predict memory accesses.
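A textbook compressed sparse row (CSR) matrix-vector product shows where that time goes. This Python sketch is a generic kernel with a hypothetical read/flop tally, not one of the labs’ proxy apps, and it doesn’t reproduce the 75% figure; it just counts, per nonzero, one integer index read, one value read, and one data-dependent gather from `x`, against two floating-point operations.

```python
def csr_spmv(row_ptr, col_idx, vals, x):
    """y = A @ x for A in compressed sparse row (CSR) form, tallying the memory
    reads and floating-point operations the kernel performs."""
    n_rows = len(row_ptr) - 1
    y = [0.0] * n_rows
    reads = flops = 0
    for i in range(n_rows):
        start, end = row_ptr[i], row_ptr[i + 1]  # 2 integer reads per row
        reads += 2
        acc = 0.0
        for k in range(start, end):
            j = col_idx[k]          # integer read: which column is nonzero?
            acc += vals[k] * x[j]   # x[j] is a data-dependent gather
            reads += 3              # col_idx[k], vals[k], x[j]
            flops += 2              # one multiply, one add
        y[i] = acc
    return y, reads, flops


# A = [[4, 0, 1],
#      [0, 2, 0],
#      [3, 0, 5]]
row_ptr = [0, 2, 3, 5]
col_idx = [0, 2, 1, 0, 2]
vals = [4.0, 1.0, 2.0, 3.0, 5.0]
y, reads, flops = csr_spmv(row_ptr, col_idx, vals, [1.0, 2.0, 3.0])
print(y, reads, flops)  # [7.0, 4.0, 18.0] 21 10
```

Even on this 3×3 toy, the kernel does 21 reads for 10 flops, and every `x[j]` address depends on an index just loaded from `col_idx`, which is the kind of access pattern prefetchers struggle to predict.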

And of course the number of companies selling AI snake oil was at a record high. AI services. AI accelerators built from FP8 hardware that doesn’t even give you an order of magnitude and doesn’t encode a NaN, so you can’t tell you’re operating entirely on garbage bits. AI consulting. Cryptobros feeling out the new AI hustle. It’s like the “big data” cycle a little over a decade ago, but instead of believing any sufficiently large pile of shit contains a pony with probability approaching one, they just …hope the glorified pattern recognition engines will find them a pony if fed their data? And they might be right, but the pony will probably be a hallucination.

…and apparently I picked up a 2nd round of COVID on the trip. Fabulous.